Image credit: IEEE Sensors Journal

Abstract

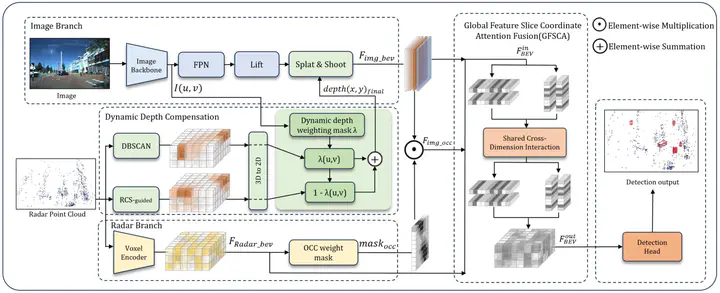

Effective representation of, and feature extraction from, the sparse point clouds of 4D millimeter-wave (4D-MMW) radar pose a significant challenge in 3D object detection. This paper proposes DDCFusion, a novel radar-camera fusion network that advances measurement precision by dynamically compensating for depth errors in sparse radar data. DDCFusion exploits the physical properties of 4D-MMW radar to improve measurement reliability and reduce depth uncertainty: it integrates RCS-derived reflectivity metrics to raise depth measurement confidence in the view transform. The occupancy-weighted radar branch prioritizes image regions with high-confidence radar returns, minimizing measurement noise in the view transform operation. Furthermore, DDCFusion optimizes spatial measurement consistency in Bird’s-Eye-View (BEV) space by modeling cross-sensor dependencies through the Global Feature Slice Coordinate Attention (GFSCA) fusion module. Experimental validation on the VoD and TJ4DRadSet datasets demonstrates superior measurement accuracy, achieving 51.08% mAP on VoD and 34.61% mAP on TJ4DRadSet and outperforming existing methods in depth error reduction and robustness to sparsity. Ablation studies verify the measurement-centric design: RCS-guided diffusion improves small-object detection (e.g., pedestrians), while DBSCAN-based clustering refines large-object localization (e.g., vehicles). The network delivers these gains in depth accuracy and robustness to sparse inputs while maintaining a competitive inference latency of 138 ms.
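The abstract names DBSCAN-based clustering but gives no parameters or implementation details. As a rough illustration of how density-based clustering could group sparse radar returns belonging to one large object, the following is a minimal pure-Python sketch of the generic DBSCAN algorithm on 2D BEV coordinates. The function names, the `eps` and `min_pts` values, and the sample points are all assumptions made for illustration; this is not the authors' implementation.

```python
from math import hypot

NOISE = -1  # label for points that belong to no cluster


def region_query(points, i, eps):
    """Return indices of all points within eps of points[i] (including i)."""
    xi, yi = points[i]
    return [j for j, (xj, yj) in enumerate(points)
            if hypot(xi - xj, yi - yj) <= eps]


def dbscan(points, eps, min_pts):
    """Generic DBSCAN: label each point with a cluster id (0, 1, ...) or NOISE."""
    labels = [None] * len(points)
    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue  # already visited
        neighbors = region_query(points, i, eps)
        if len(neighbors) < min_pts:
            labels[i] = NOISE  # may later be relabeled as a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in neighbors if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: joins but does not expand
            if labels[j] is not None:
                continue
            labels[j] = cluster
            j_neighbors = region_query(points, j, eps)
            if len(j_neighbors) >= min_pts:
                queue.extend(j_neighbors)  # core point: keep growing the cluster
    return labels


# Hypothetical BEV radar returns (x, y) in meters: two dense groups and one stray point.
radar_points = [(0.0, 0.0), (0.5, 0.0), (0.0, 0.5),
                (10.0, 10.0), (10.4, 10.0), (10.0, 10.3),
                (50.0, 50.0)]
labels = dbscan(radar_points, eps=1.0, min_pts=3)
print(labels)
```

With these assumed values, the two dense groups receive separate cluster ids and the isolated return is marked as noise, which is the property that makes density-based clustering attractive for separating vehicle-sized objects from clutter in sparse radar data.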